A remix takes an original work and edits or recreates it so that it sounds different from the original. Below is a remix of, and response to, the Digital Writing: Assessment & Evaluation Annotations created by Maury and Leslie.

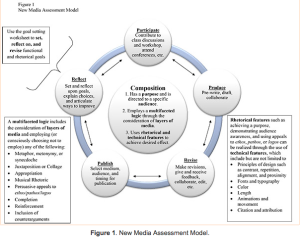

I chose to respond to Maury’s entry on Crystal Van Kooten’s article, “Toward a Rhetorically Sensitive Assessment Model for New Media Composition,” because the article was practical and a great example of digital (and multi-modal) writing. I have always been hesitant to assign multi-modal assignments because of assessment issues. Van Kooten manages to present a practical assessment model for multi-modal writing. I especially liked that she involved students in the assessment process via self-assessment and reflection. With an increase in multi-modal composing, it is important for students to know how and why they make decisions when writing. Self-assessment and reflection can help them understand their rhetorical choices. Van Kooten shares that the first attempt was unsuccessful due to the rubrics, as Maury states, “being outgrowths of the print media rubrics.” With digital writing and composing in online spaces, we often resort to the same tools and strategies even when they do not translate well into a new environment. Van Kooten’s model reminded me of our first discussion topic: the rhetorical situation. I recall that a few of us mentioned that we would like to use Biesecker’s approach to the rhetorical situation in our composition classes, but we often end up teaching Bitzer’s approach instead. Van Kooten provides an approach to new media composition that presents the complex and interactive rhetorical situation that Biesecker discussed in her article. Van Kooten’s model makes connections between technical features and rhetorical strategies; the rhetorical situation isn’t a flat author-subject-audience relationship.

Crystal Van Kooten’s model of New Media assessment of multi-modal compositions.

I chose to respond to Leslie’s entry on Brunk-Chavez and Fourzan-Rice’s article, “The evolution of digital writing assessment in action: Integrated programmatic assessment,” because it was a case study and provided a concrete look at digital writing assessment. I like the idea of being able to see things “in action.” I was immediately drawn to their argument about redefining what counts as writing and preparing students for writing in new mediums. Before I started teaching at Christopher Newport University, I taught exclusively online. Although the courses were all online (some asynchronous and some synchronous), many of the students had no idea how to write online. They were unfamiliar with how to write on a blog or discussion board. They were also unfamiliar with visual rhetoric. Part of my problem, and this connects back to Maury’s entry, was that we were required to use a course-wide rubric that was not designed for digital writing. I like that the digital writing assessment system allowed students to have an audience beyond the instructor. I think that the other four outcomes are important, but helping students understand the importance of audience awareness greatly impacts their writing (both process and product). I agree with Leslie’s evaluation. The University of Texas at El Paso’s (UTEP) program sounds wonderful, but I do not think that it is replicable. Cost and labor issues are important factors to consider. The Writing Program Administrator would need the funds to implement all of these programmatic changes. In addition, there are already labor issues with regard to adjuncts. Downsizing adjunct staff and increasing class size do not sound like the best approach. The program seems like a step in the right direction; however, I do not think that more students, fewer instructors, and more technology are the answer.

MinerWriter Instructions

Here is information given to students about the purpose of MinerWriter and how to access it.